The news is just in that Adobe is set to acquire Day Software (see also the FAQ). Assuming the deal goes through, it looks like I’ll be working for Adobe by the end of this year. I’m an open source developer, so I’m looking forward to finding out how committed Adobe is to supporting the open development model we’re using for many parts of Day products.

The first comments from Erik Larson, a senior director of product management and strategy at Adobe, seem promising, and he also asked what the deal should mean for open source. This is my response from the perspective of the open source projects I’m involved in.

First and foremost, I’m looking forward to continuing the open and standards-based development of our key technologies like Apache Jackrabbit and Apache Sling. There’s no way we’d be able to maintain the current level of innovation and productivity in these key parts of our product infrastructure without our symbiotic relationship with the open source community.

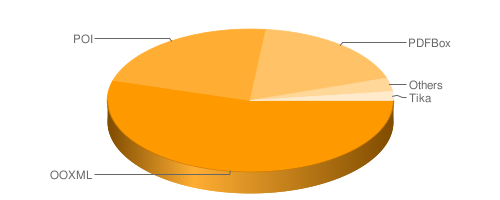

Second, I’m hoping that our experience and involvement with open source projects will help Adobe better interact with the various open source efforts that leverage Adobe standards and technologies like XMP, PDF and Flash. The Apache Software Foundation is home to a growing collection of digital media projects like PDFBox, FOP, Tika, Batik and Sanselan, all of which are in one way or another related to Adobe’s business. For example, as a committer and release manager of the Apache PDFBox project, I’d greatly appreciate better access to Adobe’s deep technical PDF know-how. Similarly, in Apache Tika we’re considering using XMP as our metadata standard, and better access to and co-operation with the people behind Adobe’s XMP toolkit SDK (see more below) would be highly valuable.

It would be great to see Adobe become more proactive in reaching out to and supporting such grass-roots efforts that leverage their technologies. I’ve dealt with Adobe lawyers on such cases before with good results, but it did take some time before I found the correct people to contact. Another area of improvement would be to make freely redistributable Adobe IP more easily accessible to external developers by pushing it out to central repositories like Maven Central, RubyGems or CPAN, as I did when making PDF core font information available on Maven Central.

Finally, it would be great to see Adobe go further in embracing an open development model for some of the codebases, like the XMP toolkit SDK, that they already release under open source licenses. I’d love to champion or mentor the effort, should Adobe be willing to bring the XMP toolkit to the Apache Incubator!

“Just like Arthur Dent, who after inserting a Babel fish in his ear could understand Vogon poetry, a computer program that uses Tika can understand Microsoft Word documents.” This is how Tika in Action, our book on Apache Tika, introduces its subject. Download the freely available first chapter to read the full introduction.
